Working with the Avro file format in Python the right way

Here are some quick tips for using the Avro file format correctly in Python.

Note: I am assuming you are familiar with the Apache Avro file format, its advantages, its shortcomings, etc.

Tip no 1: Use the correct package

Instead of the official Apache Avro package, use fastavro for Python. Trust me, the speed claims made by the author of fastavro mostly hold true.

Tip no 2: Use of schema

Avro relies on schemas. When Avro data is read, the schema used to write it is always present. You can read the specification docs to understand it in more detail. I too found it a bit confusing and keep forgetting it, so here is the rule of thumb that I follow:

schema = {
    'doc': 'A dummy avro file',  # a short description
    'name': 'dummy',  # record name; I use the intended file name, minus the .avro extension
    'type': 'record',  # Avro type; there are others (see the specification docs), but 'record' will do most of the time
    'fields': [  # the actual keys and their types
        {'name': 'key1', 'type': 'string'},
        {'name': 'key2', 'type': 'int'},
        {'name': 'key3', 'type': 'boolean'},
    ],
}
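
A repeated field name is an easy slip when typing a schema by hand, and it makes the record ambiguous because each row is a dict with one value per key. The following is a minimal sketch of a sanity check you could run before writing; `check_record_schema` is a hypothetical helper of mine, not part of fastavro, and it only looks at the top-level record.

```python
def check_record_schema(schema):
    """Return a list of problems found in a record schema dict (empty if none)."""
    problems = []
    if schema.get("type") != "record":
        problems.append("top-level type is not 'record'")
    seen = set()
    for field in schema.get("fields", []):
        name = field.get("name")
        if name is None or "type" not in field:
            problems.append(f"field missing name or type: {field!r}")
            continue
        if name in seen:
            problems.append(f"duplicate field name: {name}")
        seen.add(name)
    return problems


# Hypothetical schemas used only to exercise the helper.
good_schema = {
    "name": "dummy",
    "type": "record",
    "fields": [
        {"name": "key1", "type": "string"},
        {"name": "key2", "type": "int"},
    ],
}
bad_schema = {
    "name": "dummy",
    "type": "record",
    "fields": [
        {"name": "key2", "type": "int"},
        {"name": "key2", "type": "boolean"},
    ],
}

print(check_record_schema(good_schema))  # []
print(check_record_schema(bad_schema))   # ['duplicate field name: key2']
```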

Tip no 3: Write correctly

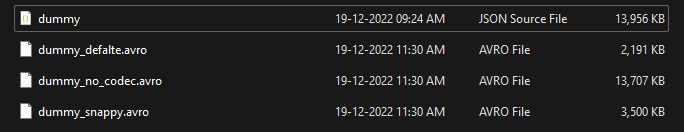

By default, fastavro's writer does not apply any codec or compression algorithm, which defeats much of the purpose of using Avro. See the size comparison below.

| Format | Size |
| --- | --- |
| JSON | 13.6 MB |
| Avro (no compression/codec) | 13.3 MB |
| Avro (deflate compression/codec) | 2.13 MB |
| Avro (snappy compression/codec) | 3.4 MB |

from fastavro import writer, parse_schema

parsed_schema = parse_schema(schema)

# more_rows holds the records to write, e.g. a list of dicts matching the schema
# the default codec is 'null', i.e. no compression
with open('dummy.avro', 'wb') as out:
    writer(out, parsed_schema, more_rows)

# per the comparison above, deflate gives the smallest file; it is also supported out of the box
with open('dummy_deflate.avro', 'wb') as out:
    writer(out, parsed_schema, more_rows, codec="deflate")

Tip no 4: Read as a generator

Assuming the file is huge (which is presumably why you moved from JSON to something like Avro), and since fastavro supports lazy loading, why not use it? It is also straightforward.

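from fastavro import reader

with open("dummy.avro", "rb") as fo:
    records = reader(fo)  # records is an iterator that yields one record at a time
    # loop over it while the file is still open
    for record in records:
        do_something(record)  # do_something is a placeholder for your own processing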
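
If your processing works better on groups of records (bulk inserts, for example), the lazily read records can be grouped without loading the whole file into memory. Here is a minimal sketch; `in_batches` is a hypothetical helper of mine built on the standard library, and it accepts any iterable, so the object returned by `reader` should work as well.

```python
from itertools import islice


def in_batches(records, size):
    """Yield lists of up to `size` records without materializing the whole input."""
    it = iter(records)
    while True:
        batch = list(islice(it, size))
        if not batch:
            return
        yield batch


# Demonstration with a plain range standing in for the Avro records.
print(list(in_batches(range(5), 2)))  # [[0, 1], [2, 3], [4]]
```

Inside the `with` block you would then loop over `in_batches(reader(fo), 1000)` and hand each list to your own processing function.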